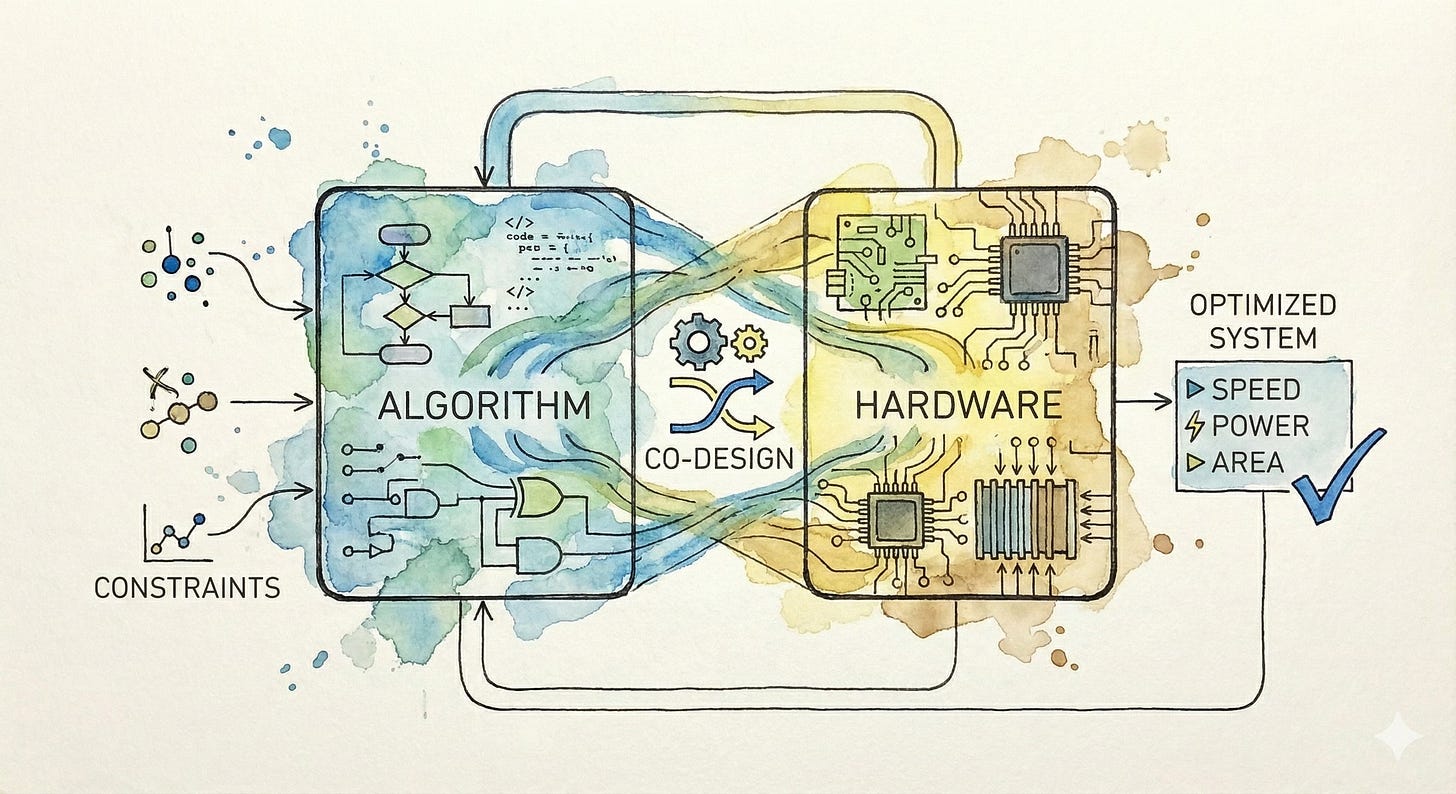

Algorithm-Hardware Co-Design

Flash Attention and beyond

In 2022, Flash Attention did something unusual: it made transformer training and inference 2-4x faster across language models, vision transformers, and multimodal architectures without changing a single line of model code.

No architectural modifications, no approximations, just faster.

The secret? Understanding how GPUs actually work and designing the algorithm around hardware constraints rather than ignoring them.

Flash Attention represents a broader shift happening in AI development. We’re moving past the era in which algorithms and hardware evolved independently. There are impactful innovations to come from co-designing the two, and from acknowledging that how an algorithm actually executes on silicon matters as much as its theoretical elegance.

If you’re working on model architectures or optimization, understanding these co-design principles really isn’t optional anymore. The gap between theoretically efficient algorithms and practically fast implementations has become too large to ignore.

A Case Study in Memory-Aware Design

The standard attention mechanism has a simple problem that creates massive practical headaches. To compute attention for a sequence of length N, you need to materialize an N×N attention matrix. For a 4K token sequence, that’s 16 million elements. For 128K tokens, it’s over 16 billion elements. Each needs to be written to and read from GPU memory.
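The element counts above are easy to check. The sketch below is only arithmetic: it assumes one score matrix per head and per batch element, stored in FP16 (2 bytes per element). The function name is made up for illustration.

```python
def attention_matrix_footprint(seq_len, bytes_per_element=2):
    """Return (elements, bytes) for one N x N attention score matrix."""
    elements = seq_len * seq_len
    return elements, elements * bytes_per_element

for n in (4 * 1024, 128 * 1024):
    elements, size = attention_matrix_footprint(n)
    # Reported per head and per batch element; real models multiply this further.
    print(f"N={n:>7,}: {elements:,} elements, {size / 2**30:.2f} GiB in FP16")
```

At 128K tokens a single head's score matrix is already 32 GiB in FP16, before multiplying by the number of heads or the batch size.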

GPUs have a memory hierarchy. High Bandwidth Memory (HBM) sits off-chip with large capacity but relatively slow access. SRAM sits on-chip with tiny capacity but roughly an order of magnitude more bandwidth. Standard attention implementations write intermediate results to HBM because they don’t fit in SRAM. This creates a memory bottleneck where the GPU spends most of its time waiting for data rather than computing.

Flash Attention solves this through tiling and recomputation. Instead of computing the entire attention matrix at once, it breaks the computation into blocks that fit in SRAM. Each block is processed completely on-chip, and a running (online) softmax rescales the partial results as new blocks arrive, so the attention output is produced without ever writing the intermediate attention scores to HBM. In the backward pass, the algorithm recomputes those scores from saved softmax statistics rather than storing them, trading a small amount of extra computation for massive reductions in memory traffic.
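To make the tiling idea concrete, here is a minimal pure-Python sketch for a single query vector. It is not the Flash Attention kernel: it omits the 1/sqrt(d) scaling, masking, and everything GPU-specific, and the function names and block size are illustrative assumptions. It shows only the core trick, which is to process keys and values block by block while keeping a running maximum, a running softmax denominator, and an unnormalized output.

```python
import math

def naive_attention(q, K, V):
    """Reference: materialize every score for one query vector q."""
    scores = [sum(a * b for a, b in zip(q, k)) for k in K]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    dim = len(V[0])
    return [sum(w * v[j] for w, v in zip(weights, V)) / total for j in range(dim)]

def tiled_attention(q, K, V, block_size=2):
    """Process K/V in blocks, keeping only a running max, sum, and output."""
    dim = len(V[0])
    running_max = float("-inf")
    running_sum = 0.0
    acc = [0.0] * dim  # unnormalized output accumulator
    for start in range(0, len(K), block_size):
        k_blk = K[start:start + block_size]
        v_blk = V[start:start + block_size]
        scores = [sum(a * b for a, b in zip(q, k)) for k in k_blk]
        new_max = max(running_max, max(scores))
        # Rescale everything accumulated under the old maximum.
        scale = math.exp(running_max - new_max)
        running_sum *= scale
        acc = [a * scale for a in acc]
        for s, v in zip(scores, v_blk):
            w = math.exp(s - new_max)
            running_sum += w
            acc = [a + w * vj for a, vj in zip(acc, v)]
        running_max = new_max
    return [a / running_sum for a in acc]

q = [0.5, -1.0]
K = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [2.0, -1.0], [-1.0, 3.0]]
V = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0], [9.0, 10.0]]
print(naive_attention(q, K, V))
print(tiled_attention(q, K, V))
```

Both functions should print the same values up to floating-point rounding, even though the tiled version never holds more than `block_size` scores at a time.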

The results are striking. At 2K sequence length, Flash Attention achieves 10x memory savings. At 4K, that becomes 20x. These aren’t just memory savings. Because memory access is the bottleneck, reducing memory traffic directly translates to 2-4x speedup in wall clock time for both training and inference across GPT-style language models, BERT-style encoders, and vision transformers like ViT.

Flash Attention 2: Parallelizing Differently

Flash Attention 2 went further by reconsidering how work gets parallelized across the GPU. The original implementation parallelized over batch size and number of attention heads. Flash Attention 2 additionally parallelizes over the sequence length dimension, assigning different blocks of queries in the forward pass (and of keys and values in the backward pass) to different thread blocks so they are processed simultaneously.

This change exploits hardware characteristics of modern GPUs more effectively, improving work distribution across streaming multiprocessors. The result is another 2x speedup over the original on A100 GPUs, reaching around 230 TFLOPs with FP16/BF16 (compared to A100’s theoretical 312 TFLOPs for this precision).
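A rough way to see why the extra parallel dimension matters is to count independent work items against the number of streaming multiprocessors. The sketch below assumes an A100 with 108 SMs and a sequence split into fixed-size blocks; the 128-row block size and the example shapes are illustrative, not taken from the Flash Attention 2 code.

```python
import math

A100_SMS = 108  # streaming multiprocessors on an A100

def thread_blocks(batch, heads, seq_len, seq_block=None):
    """Independent work items when splitting by batch and head,
    and optionally by block along the sequence dimension."""
    blocks = batch * heads
    if seq_block is not None:
        blocks *= math.ceil(seq_len / seq_block)
    return blocks

batch, heads, seq_len = 1, 16, 8192  # long sequence, small batch
v1 = thread_blocks(batch, heads, seq_len)
v2 = thread_blocks(batch, heads, seq_len, seq_block=128)
print(f"batch x heads only:  {v1} blocks for {A100_SMS} SMs")
print(f"plus sequence split: {v2} blocks for {A100_SMS} SMs")
```

With a small batch and a long sequence, splitting only over batch and heads yields fewer work items than there are SMs, so most of the chip sits idle. Adding the sequence dimension produces far more blocks than SMs.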

Flash Attention 3: Hardware-Specific Optimization

Flash Attention 3 takes co-design even further by targeting specific hardware capabilities of NVIDIA’s Hopper architecture (H100 GPUs). Three key innovations extract additional performance:

Asynchronous execution: Hopper introduces new asynchronous instructions for Tensor Cores (WGMMA) and memory access (TMA). Flash Attention 3 uses warp specialization to overlap computation and data movement. While one group of threads loads data from memory, others perform computation on previously loaded data. This pipelining keeps the hardware continuously busy rather than alternating between compute and memory phases. The PyTorch blog post on Flash Attention 3 provides an accessible explanation of these optimizations with diagrams.

Interleaved operations: On H100, matrix multiplication achieves 989 TFLOPs but special functions (like the exponentials needed for softmax) only reach 3.9 TFLOPs. Even though attention needs far fewer exponentials than matmul FLOPs, this gap means the softmax exponentials can take roughly 50% as long as the matrix multiplications themselves. Flash Attention 3 interleaves the two, computing the softmax for one block while the Tensor Cores are busy with the matrix multiplications for another, rather than running them sequentially.

FP8 with incoherent processing: Flash Attention 3 supports FP8 low precision, but naive FP8 quantization produces large numerical errors in attention due to outliers. The solution uses “incoherent processing” that applies a Hadamard transform with random signs before quantization. This spreads outliers across the representation, making quantization errors more uniform and manageable. The result is FP8 attention with 2.6x lower error than standard per-tensor quantization.
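The outlier-spreading effect of incoherent processing can be illustrated in a few lines. This is a toy sketch, not Flash Attention 3's implementation: it builds a small Hadamard matrix with the Sylvester construction, applies random sign flips, and skips the actual FP8 quantization step. The vectors and helper names are made up for illustration.

```python
import math
import random

def hadamard(n):
    """Sylvester construction of an n x n Hadamard matrix (n a power of two),
    scaled by 1/sqrt(n) so that it is orthonormal."""
    H = [[1.0]]
    while len(H) < n:
        H = [row + row for row in H] + [row + [-x for x in row] for row in H]
    scale = 1.0 / math.sqrt(n)
    return [[x * scale for x in row] for row in H]

def rotate(vec, H, signs):
    """Apply random sign flips, then the Hadamard transform."""
    flipped = [s * x for s, x in zip(signs, vec)]
    return [sum(h * x for h, x in zip(row, flipped)) for row in H]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

random.seed(0)
d = 8
H = hadamard(d)
signs = [random.choice((-1.0, 1.0)) for _ in range(d)]

q = [100.0, 0.5, -0.3, 0.2, 0.1, -0.4, 0.3, 0.2]  # one large outlier
k = [0.2, -0.1, 0.4, 0.3, -0.2, 0.1, 0.5, -0.3]
q_rot, k_rot = rotate(q, H, signs), rotate(k, H, signs)

print("largest |q| before/after:", max(abs(x) for x in q), max(abs(x) for x in q_rot))
print("q.k before/after:        ", dot(q, k), dot(q_rot, k_rot))
```

Because the same orthogonal transform is applied to both the query and the key, their dot product (and therefore the attention score) is unchanged, while the single large entry is smeared across all coordinates. That makes the values much friendlier to a low-precision format with limited dynamic range.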

These optimizations push Flash Attention 3 to 740 TFLOPs with FP16 (75% of H100’s theoretical maximum) and close to 1.2 PFLOPs with FP8. This is 1.5-2x faster than Flash Attention 2 on Hopper GPUs, achieved through deep understanding of the microarchitecture. The official implementation is available on GitHub.
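The roughly 50% figure quoted for softmax under interleaved operations can be reproduced with back-of-envelope arithmetic. The sketch below assumes a head dimension of 128 and counts, per attention score, 2d FLOPs for the QK^T entry plus 2d FLOPs for its contribution to the output, against a single exponential. The throughput numbers are the ones quoted in this article.

```python
MATMUL_TFLOPS = 989.0  # H100 FP16 matmul throughput quoted above
EXP_TFLOPS = 3.9       # special-function throughput quoted above

def exp_time_relative_to_matmul(head_dim):
    """Time spent on exponentials divided by time spent on matmuls, per score."""
    matmul_flops = 4 * head_dim  # 2*d for QK^T plus 2*d for the PV product
    exp_ops = 1                  # one exponential per attention score
    matmul_time = matmul_flops / MATMUL_TFLOPS
    exp_time = exp_ops / EXP_TFLOPS
    return exp_time / matmul_time

print(f"{exp_time_relative_to_matmul(128):.2f}")
```

Under these assumptions the exponentials take about half as long as the matmuls, which is why hiding them behind Tensor Core work pays off.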

Systems Design Meets Algorithms (PagedAttention)

Flash Attention optimizes the attention computation itself.

PagedAttention, implemented in vLLM, tackles a different hardware constraint: memory management during inference. During inference, the KV cache grows dynamically as tokens are generated. Traditional systems allocate contiguous memory blocks for each sequence. This creates three types of waste:

Internal fragmentation: Systems allocate memory for the maximum possible sequence length but most sequences are shorter, leaving allocated memory unused.

External fragmentation: As sequences of varying lengths complete, they leave gaps of unusable memory between allocated blocks.

No memory sharing: When multiple outputs are generated from the same prompt (as in beam search or parallel sampling), each maintains its own KV cache even though they share input context.

Traditional systems waste 60-80% of KV cache memory. On a 40GB A100 running LLaMA-13B, the model parameters consume 26GB, leaving only 14GB for KV cache. With 70% waste, you’re effectively using less than 5GB for actual inference.
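The arithmetic behind that estimate is simple enough to write down. This sketch uses the figures from the paragraph above and ignores activations and other runtime overhead, which would shrink the budget further. The function name is illustrative.

```python
def usable_kv_cache_gb(gpu_gb, params_gb, waste_fraction):
    """KV cache memory that actually holds useful tokens."""
    kv_budget = gpu_gb - params_gb
    return kv_budget * (1.0 - waste_fraction)

for waste in (0.6, 0.7, 0.8):
    usable = usable_kv_cache_gb(40, 26, waste)
    print(f"{waste:.0%} waste -> {usable:.1f} GB of useful KV cache")
```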

PagedAttention draws inspiration from operating system virtual memory. It divides the KV cache into fixed-size blocks (pages). Logical blocks map to potentially non-contiguous physical blocks through a block table. As tokens are generated, new physical blocks are allocated on demand. Memory waste only occurs in the last partially filled block of each sequence, reducing waste to under 4%.
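The block-table idea can be sketched with plain bookkeeping. The class below is a toy model, not vLLM's implementation or API: it tracks which physical block each logical block maps to and stores no tensors. The class name, method names, and block size of 16 are assumptions made for illustration.

```python
class PagedKVCache:
    """Toy block-table allocator; stores only bookkeeping, not real tensors."""

    def __init__(self, num_physical_blocks, block_size):
        self.block_size = block_size
        self.free_blocks = list(range(num_physical_blocks))
        self.block_tables = {}  # seq_id -> list of physical block ids
        self.seq_lens = {}      # seq_id -> tokens written so far

    def append_token(self, seq_id):
        """Reserve a slot for one new token, allocating a block only when needed."""
        table = self.block_tables.setdefault(seq_id, [])
        length = self.seq_lens.get(seq_id, 0)
        if length % self.block_size == 0:  # last block is full, or none exists yet
            if not self.free_blocks:
                raise MemoryError("no free KV cache blocks")
            table.append(self.free_blocks.pop())
        self.seq_lens[seq_id] = length + 1
        return table[-1], length % self.block_size  # (physical block, offset)

    def free(self, seq_id):
        """Return a finished sequence's blocks to the pool."""
        self.free_blocks.extend(self.block_tables.pop(seq_id, []))
        self.seq_lens.pop(seq_id, None)

    def wasted_slots(self, seq_id):
        """Unused slots, which can only be in the sequence's last block."""
        return len(self.block_tables[seq_id]) * self.block_size - self.seq_lens[seq_id]

cache = PagedKVCache(num_physical_blocks=8, block_size=16)
for _ in range(16):
    cache.append_token("a")
for _ in range(5):
    cache.append_token("b")
for _ in range(4):
    cache.append_token("a")  # "a" grows again after "b" took a block

print(cache.block_tables)  # "a" ends up with non-contiguous physical blocks
print(cache.wasted_slots("a"), cache.wasted_slots("b"))
```

Sequence "a" ends up spread over two physical blocks that are not adjacent, which is fine because lookups go through the block table. The waste for each sequence is bounded by one block, regardless of how long the sequence could have grown.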

The memory savings enable larger batch sizes, which directly improves throughput. vLLM with PagedAttention achieves 2-4x higher throughput than systems without it, reaching 24x improvements over naive HuggingFace implementations for large language model inference. The vLLM architecture deep dive provides implementation details, and the open source code is available.

Principles of Effective Algorithm-Hardware Co-Design

These examples reveal patterns that apply broadly when co-designing algorithms and hardware:

1. Understanding Memory Hierarchy Is Critical

Modern accelerators have complex memory hierarchies. H100 pairs 80 GB of HBM3 at roughly 3 TB/s with far smaller on-chip SRAM: a 50 MB L2 cache shared across the chip, plus up to 256 KB of combined L1 cache and shared memory in each SM, which is much faster to access than HBM (here’s the Hopper architecture whitepaper for full specs). Algorithms that ignore this hierarchy and repeatedly access slow memory create bottlenecks. Effective co-design structures algorithms to maximize fast memory usage and minimize slow memory traffic.

Flash Attention excels because it fits computations in SRAM. Similarly, PagedAttention eliminates memory fragmentation that prevents efficient use of available memory. Neither algorithm changes what gets computed; instead, both change how data moves through the memory hierarchy.

2. Hardware Constraints Can Inspire Better Algorithms

Co-design isn’t just about making algorithms faster on existing hardware. Sometimes hardware constraints reveal better algorithmic approaches. PagedAttention’s block-based memory management wasn’t just an optimization. It enabled memory sharing across requests that would be impractical with contiguous allocation, making complex sampling strategies like beam search much more efficient.
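Block-level sharing is typically done with reference counts and copy-on-write. The sketch below is a toy illustration of that idea under assumed names, not vLLM's actual classes: several samples forked from one prompt point at the same physical blocks, and a sample gets a private copy of a block only when it needs to write into one that is still shared. Only bookkeeping is modeled; a real system would also copy the block's contents.

```python
class SharedBlockPool:
    """Toy reference-counted block pool (bookkeeping only)."""

    def __init__(self, num_blocks):
        self.free_blocks = list(range(num_blocks))
        self.ref_count = {}

    def allocate(self):
        block = self.free_blocks.pop()
        self.ref_count[block] = 1
        return block

    def fork(self, block_table):
        """Give a new sequence the same physical blocks as its parent."""
        for block in block_table:
            self.ref_count[block] += 1
        return list(block_table)

    def write_last_block(self, block_table):
        """Copy-on-write: take a private block before writing if the last one is shared."""
        last = block_table[-1]
        if self.ref_count[last] > 1:
            self.ref_count[last] -= 1
            block_table[-1] = self.allocate()
        return block_table[-1]

pool = SharedBlockPool(num_blocks=8)
parent = [pool.allocate() for _ in range(3)]      # prompt occupies 3 blocks
children = [pool.fork(parent) for _ in range(2)]  # 2 more samples share them
for table in [parent] + children:
    pool.write_last_block(table)                  # each sample appends its own token

blocks_in_use = 8 - len(pool.free_blocks)
print(blocks_in_use)
```

In this example three samples use five physical blocks instead of the nine that separate contiguous caches would need, because the first two prompt blocks are never duplicated.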

Similarly, Flash Attention’s recomputation strategy (trading compute for memory) only makes sense in the hardware context where memory is the bottleneck. On hardware with different characteristics, you’d design differently.

3. Asynchrony and Parallelism Require Hardware-Specific Thinking

Modern accelerators have deeply asynchronous execution models with multiple execution units operating independently. Flash Attention 3’s warp specialization exploits this by having different thread groups handle different tasks simultaneously.

Taking advantage of asynchrony requires thinking about algorithms differently than sequential pseudo-code suggests. You need to consider what can execute concurrently, how to partition work across execution units, and how to hide latency of slow operations behind fast ones.
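As a loose CPU-side analogy for the producer/consumer structure behind warp specialization, the sketch below has one thread "load" tiles into a bounded buffer while another computes on tiles that have already arrived. It is only an illustration of the pattern: the function names are made up, the loads are fake, and Python threads will not show the speedup real hardware gets from overlapping memory and compute.

```python
import queue
import threading

def load_tile(i):
    """Stand-in for a memory load: returns tile i as a list of numbers."""
    return [float(i * 4 + j) for j in range(4)]

def compute(tile):
    """Stand-in for the math done on a tile once it is loaded."""
    return sum(x * x for x in tile)

def pipelined_sum(num_tiles, depth=2):
    """Producer loads tiles while the consumer computes on earlier ones."""
    # Bounded queue: plays the role of a fixed number of staging buffers.
    buffer = queue.Queue(maxsize=depth)

    def producer():
        for i in range(num_tiles):
            buffer.put(load_tile(i))  # blocks when all staging buffers are full
        buffer.put(None)              # sentinel: no more tiles

    loader = threading.Thread(target=producer)
    loader.start()
    total = 0.0
    while (tile := buffer.get()) is not None:
        total += compute(tile)
    loader.join()
    return total

print(pipelined_sum(8))
```

The bounded buffer is the important design choice: it lets the loader run ahead by a fixed number of tiles, which hides load latency without requiring unbounded fast memory.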

4. Precision and Numeric Format Are Design Choices

Choosing numeric precision isn’t just about accuracy versus speed. Different hardware supports different numeric formats with vastly different performance characteristics. H100’s FP8 tensor cores can reach nearly 2 PFLOPs, double FP16’s 989 TFLOPs.

Effective co-design considers numeric format as a first-class design choice. Flash Attention 3’s incoherent processing makes FP8 practical for attention by solving the outlier problem. Similar co-design opportunities exist for integer quantization, block floating point, and other numeric formats, but each requires algorithm adaptations to maintain accuracy.

Co-Design Beyond Attention

While Flash Attention and PagedAttention focus on attention in transformers, co-design principles apply broadly:

Quantization-aware training: Rather than training in full precision and quantizing later, quantization-aware training incorporates quantization into the training process. This produces models that maintain accuracy at low precision by learning to work within hardware quantization constraints.

Sparse operations: GPUs can accelerate sparse matrix operations, but only if the sparsity follows structured patterns (like 2:4 sparsity, where 2 of every 4 consecutive values are zero). Algorithms designed for unstructured sparsity don’t accelerate well. Co-designed approaches either constrain training to produce hardware-friendly sparsity patterns or develop specialized hardware for the flexible sparsity patterns the algorithms naturally produce.

Fused operations: Compilers like PyTorch’s torch.compile and JAX’s JIT can fuse multiple operations into single kernels, reducing memory traffic. But fusion opportunities depend on algorithm structure. Algorithms designed with fusion in mind (minimizing data dependencies, grouping compatible operations) enable more aggressive optimization. NVIDIA’s CUTLASS library provides templates for building fused CUDA kernels and is used in Flash Attention 3’s implementation.

Memory-bound versus compute-bound operations: Different layers in neural networks have different computational characteristics. Large matrix multiplications are compute-bound. Normalization layers and activations are memory-bound. Co-design considers this heterogeneity, potentially using different precision or even different hardware for different layers.
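The 2:4 pattern mentioned under sparse operations is easy to state in code. The sketch below uses magnitude pruning (keep the two largest-magnitude values in each group of four), which is one common heuristic and an assumption here. It shows only the pattern, not how the hardware stores or multiplies the result.

```python
def prune_2_of_4(weights):
    """Zero the two smallest-magnitude values in each consecutive group of four."""
    if len(weights) % 4 != 0:
        raise ValueError("length must be a multiple of 4")
    pruned = []
    for start in range(0, len(weights), 4):
        group = weights[start:start + 4]
        # Indices of the two largest magnitudes in this group.
        keep = sorted(range(4), key=lambda i: abs(group[i]), reverse=True)[:2]
        pruned.extend(x if i in keep else 0.0 for i, x in enumerate(group))
    return pruned

row = [0.9, -0.1, 0.05, -0.7, 0.2, 0.3, -0.25, 0.01]
print(prune_2_of_4(row))
```

Every group of four keeps exactly two nonzeros, which is the regularity that lets hardware skip the zeros predictably. Unstructured pruning with the same overall sparsity gives no such guarantee.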

Implications for Researchers and Practitioners

If you’re developing new model architectures or algorithms, these co-design principles have practical implications:

Profile early and understand bottlenecks: Don’t assume you know where the bottleneck is. Profile your implementation on target hardware. Is it memory bandwidth? Compute? Specific operations like softmax? The optimization strategy differs dramatically based on the actual bottleneck.

Learn your target hardware: You don’t need to become a GPU architecture expert, but understanding basic characteristics matters. Know the memory hierarchy. Understand what numeric formats are supported and their performance characteristics. Be aware of special capabilities like tensor cores or asynchronous execution.

Design with data movement in mind: In most modern AI workloads, data movement costs more than computation. When designing algorithms, think about how data flows through memory. Can intermediate results fit in fast memory? Can you recompute instead of storing? Can operations be reordered to improve memory access patterns?

Consider numeric format as part of the design: Don’t treat precision as an afterthought. Different hardware has different sweet spots for numeric formats. Designing algorithms that work well at lower precision from the start is easier than retrofitting later.

Collaborate across the stack: The most effective co-design happens when algorithm developers and hardware engineers communicate. If you’re designing models, talk to the people deploying them. If you’re building hardware, understand what algorithmic patterns are common. The boundary between hardware and software is where innovation happens.
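Before reaching for a profiler, a back-of-envelope roofline estimate can suggest whether an operation is likely memory-bound or compute-bound. The sketch below uses the approximate H100 figures quoted earlier in this article and assumes the idealized case where each operand crosses HBM exactly once. The function names and shapes are illustrative, and real kernels will deviate, so treat it as a first guess to confirm by profiling.

```python
PEAK_FLOPS = 989e12      # FP16 matmul peak quoted in this article
PEAK_BYTES_PER_S = 3e12  # approximate HBM bandwidth quoted in this article

def matmul_intensity(m, n, k, bytes_per_element=2):
    """FLOPs per byte for C = A @ B, assuming each matrix crosses HBM once."""
    flops = 2 * m * n * k
    bytes_moved = bytes_per_element * (m * k + k * n + m * n)
    return flops / bytes_moved

def elementwise_intensity(flops_per_element=1, bytes_per_element=2):
    """FLOPs per byte for an op that reads one element and writes one element."""
    return flops_per_element / (2 * bytes_per_element)

def bound(intensity):
    """Classify against the ridge point where compute and bandwidth balance."""
    ridge = PEAK_FLOPS / PEAK_BYTES_PER_S
    return "compute-bound" if intensity >= ridge else "memory-bound"

cases = {
    "4096^3 matmul": matmul_intensity(4096, 4096, 4096),
    "1 x 4096 x 4096 matmul (single-token decode)": matmul_intensity(1, 4096, 4096),
    "elementwise activation": elementwise_intensity(),
}
for name, intensity in cases.items():
    print(f"{name}: {intensity:.2f} FLOPs/byte -> {bound(intensity)}")
```

The same matrix multiplication flips from compute-bound to memory-bound when the batch dimension collapses to one. That is one reason training and single-stream inference often call for different optimizations.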

The Future of Co-Design?

As we move toward more diverse AI workloads (multimodal models, long-context understanding, real-time agents), co-design becomes even more critical. Hardware is diversifying beyond GPUs. Specialized accelerators, photonic chips, and neuromorphic processors each have unique characteristics that algorithms need to accommodate.

The next generation of breakthrough AI systems won’t just be better algorithms or better hardware. It’ll be algorithms and hardware designed together, each informing the other. Flash Attention and PagedAttention are early examples of what this looks like. The principles they demonstrate apply far beyond attention mechanisms: understanding memory hierarchies, exploiting hardware asynchrony, managing memory efficiently, and choosing numeric formats carefully.

For researchers pushing the boundaries of what is possible with AI, understanding these principles isn’t just about optimization. It’s about expanding what kinds of models are practical to deploy, what scales are achievable, and what applications become feasible. Hardware is more than just the platform your algorithm runs on; it’s part of the design space.

As always, all opinions are my own and do not reflect those of any employer or funding agency.

This is a brilliant synthesis of where algorithm design is heading. The way you connect Flash Attention's memory-aware design to broader co-design principles really clarifies why so many supposedly "efficient" models still underperform in production. What's particularly insightful is how recomputation becomes cheaper than memory access: that inversion of the traditional tradeoff fundamentally changes how we should think about algorithmic complexity. The progression from Flash 1 to 3 shows that hardware-specific optimization isn't a one-time thing but an ongoing conversation between silicon capabilities and algorithm structure.